This is a simple, straightforward style post. [EDIT: lol, I wish – click here to see what I ACTUALLY ended up doing]. I am working on updating my tks “Suite” of tools for Shotgun-Nuke integration, and I wanted to be sure I was doing everything as safely as possible. In particular, I wanted to make sure that when a user “hides” the panel in Nuke, my panel cleans up after itself. (I noticed that, by default, when the panel is “closed” with the X box, the panel itself and the thread keep going and going and going in the background.)

With some dir() inspection and some super() magic, I was able to work out which functions to override if you want to add special open and close logic to a Nuke panel:

    # Assumes QWidget comes from Nuke's bundled Qt binding, e.g.
    # "from PySide.QtGui import QWidget" (an assumption; the import is not shown in the post).
    class NotesPanel(QWidget):

        def __init__(self, scrollable=True):
            QWidget.__init__(self)

        def hideEvent(self, *args, **kwargs):
            # this function fires when the user closes the panel
            super(NotesPanel, self).hideEvent(*args, **kwargs)

        def showEvent(self, *args, **kwargs):
            # this function fires when the user opens the panel
            super(NotesPanel, self).showEvent(*args, **kwargs)

Short and sweet! Hopefully this will help others who are looking for similar logic.
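For the cleanup itself, here is a minimal sketch of how the two overrides might start and stop a background thread. It is not the code from my tools: `PollingWorker` and `PanelCleanupMixin` are hypothetical names made up for this example, the worker does no real work, and I am assuming the mixin cooperates with `QWidget` through `super()` the same way the overrides above do.

```python
import threading


class PollingWorker(object):
    """Hypothetical stand-in for whatever background thread the panel owns."""

    def __init__(self, interval=0.05):
        self._interval = interval
        self._stop_event = threading.Event()
        self._thread = None

    def start(self):
        # Starting twice is harmless: an already running worker is left alone.
        if self.is_running():
            return
        self._stop_event.clear()
        self._thread = threading.Thread(target=self._run)
        self._thread.daemon = True
        self._thread.start()

    def stop(self):
        # Ask the loop to exit, then wait for the thread to finish.
        self._stop_event.set()
        if self._thread is not None:
            self._thread.join()
            self._thread = None

    def is_running(self):
        return self._thread is not None and self._thread.is_alive()

    def _run(self):
        # wait() returns True as soon as stop() sets the event, ending the loop.
        while not self._stop_event.wait(self._interval):
            pass  # real polling work would go here


class PanelCleanupMixin(object):
    """Starts the worker when the panel is shown and stops it when hidden.

    Meant to be listed before the widget base class so that super() reaches
    the widget's own showEvent/hideEvent.
    """

    worker = None

    def showEvent(self, *args, **kwargs):
        if self.worker is None:
            self.worker = PollingWorker()
        self.worker.start()
        super(PanelCleanupMixin, self).showEvent(*args, **kwargs)

    def hideEvent(self, *args, **kwargs):
        if self.worker is not None:
            self.worker.stop()
        super(PanelCleanupMixin, self).hideEvent(*args, **kwargs)
```

In a real panel this would be combined as something like `class NotesPanel(PanelCleanupMixin, QWidget)`. Using a `threading.Event` means the thread gets a polite request to stop and the panel waits for it, instead of the thread being left running after the panel goes away.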

UPDATED: Well, nothing is ever that simple, is it?


So I went to work on building a Nuke-based shot tracking system, first for artists but then expanding to include supervisors as well. I called it “the dashboard”, and though it started small it quickly became essential to the Molecule’s pipeline. When the studio switched tracking packages to Shotgun, a lot more functionality was exposed and the whole thing just got 50% better. You can catch a quick glimpse of it in this promotional video from Autodesk. At around 0:55, the awesome Rick Shick talks about how he uses it instead of the web-based interface almost exclusively in his role as comp supervisor:

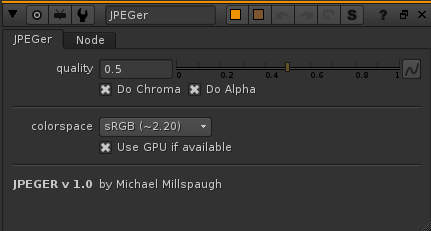

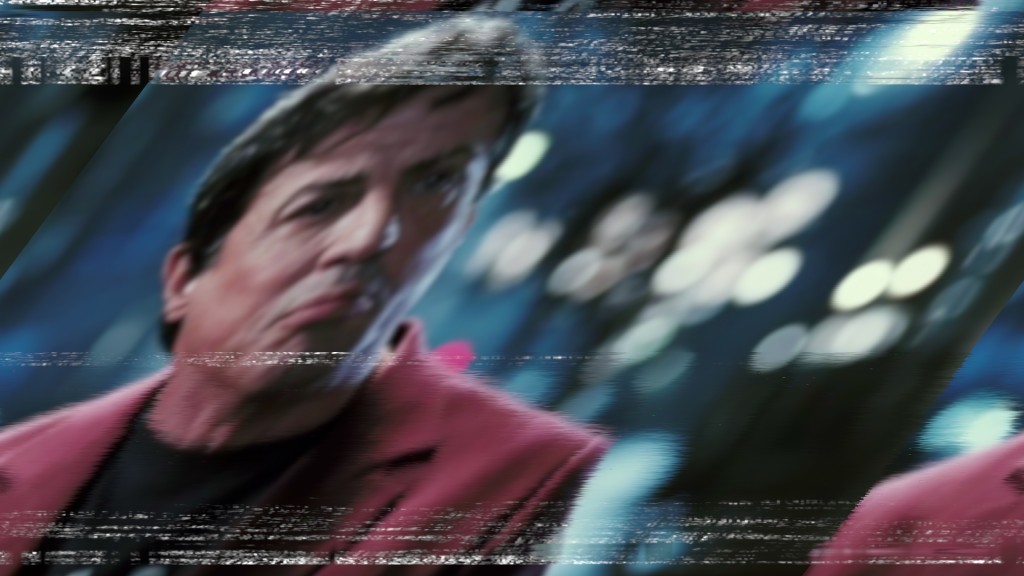

I’ve been annoyed with the basic JPEG workflow in Nuke for a while: the only way to get that magic compression look was to write the image out as a JPEG and then read it back in. So when I discovered Blink scripts last week, building this was a great way to learn.
