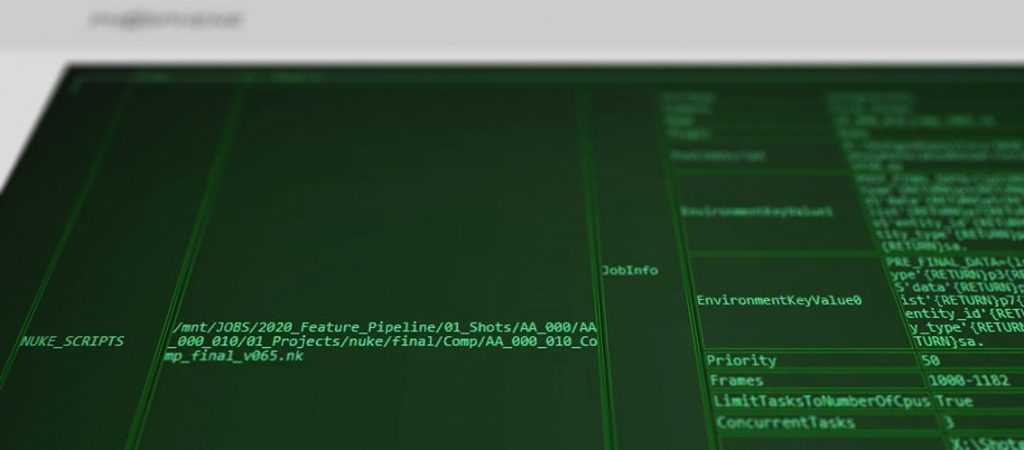

The number one request at every studio is the ability to run pipeline actions from the Shotgun web interface. Luckily, SG allows this through Action Menu Items (AMIs). The gist is: choose an entity type in the web interface (e.g. Version), name your new item (say, Render Slates…), and set up an IP address to receive the information. SG then packages the user’s selection of that entity type (one entity or several) and sends the data to the receiving machine, redirecting the user’s web browser in the process. With that data and connection, the machine at the other end can perform any action (say, submit all selected shots to the farm) and give the end user visual feedback (success or failure).
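As a rough sketch of the receiving end: assuming the selection arrives as a form-encoded POST body with fields such as `entity_type`, `user_login`, and a comma-separated `selected_ids` (worth checking against a real request from your own site), parsing it in Python might look something like this. `parse_ami_payload` is a hypothetical helper name, not part of any Shotgun API.

```python
from urllib.parse import parse_qs


def parse_ami_payload(body: str) -> dict:
    """Turn a form-encoded AMI POST body into a small dict.

    Assumption: the body carries `entity_type`, `selected_ids` and
    `user_login` fields; verify the names against an actual request.
    """
    # parse_qs returns a list per key; keep the first value of each.
    fields = {key: values[0] for key, values in parse_qs(body).items()}
    raw_ids = fields.get("selected_ids", "")
    return {
        "entity_type": fields.get("entity_type"),
        # The selection is assumed to be a comma-separated list of ids.
        "selected_ids": [int(i) for i in raw_ids.split(",") if i.strip()],
        "user_login": fields.get("user_login"),
    }


payload = parse_ami_payload(
    "entity_type=Version&selected_ids=101%2C102&user_login=jdoe"
)
print(payload["entity_type"], payload["selected_ids"])
```

Whatever web framework sits in front of this, the idea is the same: decode the body once, then hand a clean dictionary to whichever action was requested.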

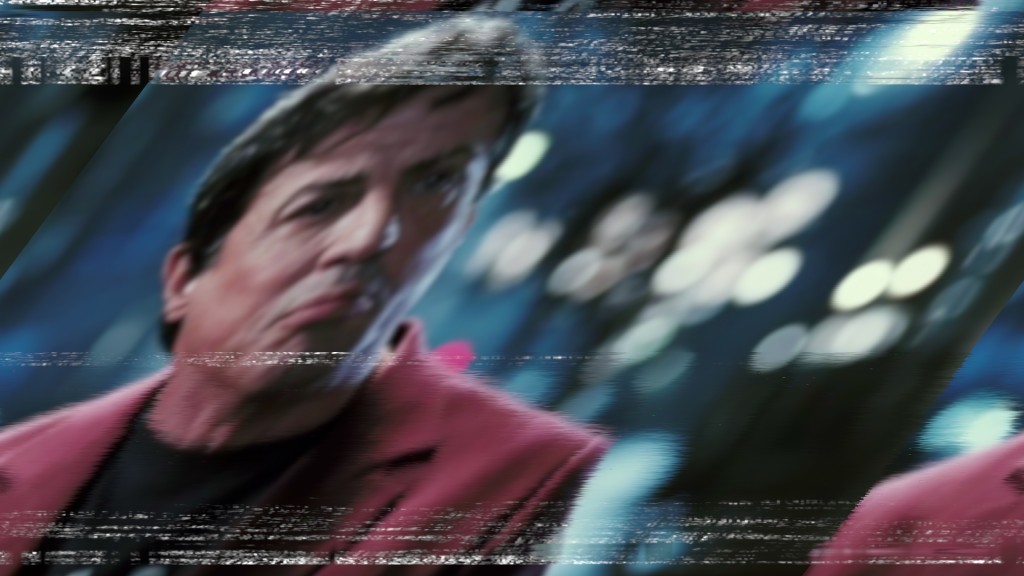

I developed a plugin-based AMI Server (philosophically similar to the Shotgun Event Server) for just this purpose, and I’m very happy with the result. The key is not only to perform the action, but to provide feedback to the user in a friendly and readable way; the user should be able to tell clearly whether the action has succeeded or failed. I even had a friend in CSS design whip me up a nice-looking CRT-style output because, why not 😉
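To give a feel for the plugin idea, here is a minimal sketch, not the actual server: each action registers itself under a name, returns a success flag plus a message, and the dispatcher turns that into a small HTML snippet the browser can show. Every name here (`PLUGINS`, `register`, `render_slates`, `run_action`, the CSS classes) is made up for illustration.

```python
from html import escape
from typing import Callable, Dict, List, Tuple

# A handler takes (entity_type, ids) and returns (succeeded, message).
Handler = Callable[[str, List[int]], Tuple[bool, str]]
PLUGINS: Dict[str, Handler] = {}


def register(name: str) -> Callable[[Handler], Handler]:
    """Decorator that adds a handler to the plugin registry."""
    def decorator(func: Handler) -> Handler:
        PLUGINS[name] = func
        return func
    return decorator


@register("render_slates")
def render_slates(entity_type: str, ids: List[int]) -> Tuple[bool, str]:
    # Placeholder: a real plugin would submit work to the farm here.
    if not ids:
        return False, "No entities selected."
    return True, f"Queued {len(ids)} {entity_type} slate(s)."


def run_action(name: str, entity_type: str, ids: List[int]) -> str:
    """Run a plugin and render a clear success/failure message as HTML."""
    handler = PLUGINS.get(name)
    if handler is None:
        ok, message = False, f"Unknown action: {name}"
    else:
        try:
            ok, message = handler(entity_type, ids)
        except Exception as exc:
            # A crashing plugin should still produce readable feedback.
            ok, message = False, f"{type(exc).__name__}: {exc}"
    status = "SUCCESS" if ok else "FAILURE"
    css_class = "success" if ok else "failure"
    return f'<pre class="{css_class}">[{status}] {escape(message)}</pre>'


print(run_action("render_slates", "Version", [101, 102]))
```

The point of funnelling everything through one dispatcher is that unknown actions and plugin exceptions end up as the same kind of readable failure message, so the user never lands on a blank page.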

Check out the video below to see it in action!

So I went to work on building a Nuke-based shot tracking system, first for artists but then expanding to include supervisors as well. I called it “the dashboard”, and though it started small it quickly became essential to the Molecule’s pipeline. When the studio switched tracking packages to Shotgun, a lot more functionality was exposed and the whole thing just got 50% better. You can catch a quick glimpse of it in this promotional video from Autodesk: at around 0:55, the awesome Rick Shick talks about how he uses it instead of the web-based interface almost exclusively in his role as comp supervisor:

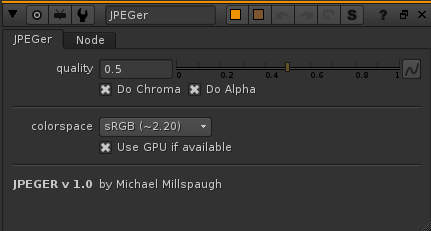

I’ve been annoyed with the basic JPEG workflow in Nuke for a while: the only way to get that magic compression look was to write the image out as a JPEG and then read it back in. So when I discovered Blink scripts last week, building this was a great way to learn.
